Fortunately for such artificial neural networks (later rechristened “deep learning” when they included extra layers of neurons), decades of Moore’s Law and other improvements in computer hardware yielded a roughly 10-million-fold increase in the number of computations that a computer could do in a second. So when researchers returned to deep learning in the late 2000s, they wielded tools equal to the challenge.

These more-powerful computers made it possible to construct networks with vastly more connections and neurons and hence greater ability to model complex phenomena. Researchers used that ability to break record after record as they applied deep learning to new tasks.

While deep learning’s rise may have been meteoric, its future may be bumpy. Like Rosenblatt before them, today’s deep-learning researchers are nearing the frontier of what their tools can achieve. To understand why this will reshape machine learning, you must first understand why deep learning has been so successful and what it costs to keep it that way.

Deep learning is a modern incarnation of the long-running trend in artificial intelligence that has been moving from streamlined systems based on expert knowledge toward flexible statistical models. Early AI systems were rule based, applying logic and expert knowledge to derive results. Later systems incorporated learning to set their adjustable parameters, but these were usually few in number.

Today’s neural networks also learn parameter values, but those parameters are part of such flexible computer models that, if they are big enough, they become universal function approximators, meaning they can fit any type of data. This unlimited flexibility is the reason why deep learning can be applied to so many different domains.

The flexibility of neural networks comes from taking the many inputs to the model and having the network combine them in myriad ways. This means the outputs won’t be the result of applying simple formulas but instead immensely complicated ones.

For example, when the cutting-edge image-recognition system Noisy Student converts the pixel values of an image into probabilities for what the object in that image is, it does so using a network with 480 million parameters. The training to ascertain the values of such a large number of parameters is even more remarkable because it was done with only 1.2 million labeled images, which may understandably confuse those of us who remember from high school algebra that we are supposed to have more equations than unknowns. Breaking that rule turns out to be the key.

Deep-learning models are overparameterized, which is to say they have more parameters than there are data points available for training. Classically, this would lead to overfitting, where the model not only learns general trends but also the random vagaries of the data it was trained on. Deep learning avoids this trap by initializing the parameters randomly and then iteratively adjusting sets of them to better fit the data using a method called stochastic gradient descent. Surprisingly, this procedure has been proven to ensure that the learned model generalizes well.
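To make the mechanics concrete, here is a minimal sketch of stochastic gradient descent on a deliberately overparameterized model. It is a toy illustration, not anything from the systems discussed here: the linear model, the 5 data points, the 20 parameters, the learning rate, and the function names are all assumptions chosen for the example. It shows only the random initialization and the iterative per-sample updates; it says nothing about how well the fitted model generalizes.

```python
import random


def mean_squared_error(weights, xs, ys):
    """Average squared prediction error of a linear model over a data set."""
    total = 0.0
    for x, y in zip(xs, ys):
        pred = sum(w * xi for w, xi in zip(weights, x))
        total += (pred - y) ** 2
    return total / len(xs)


def train_sgd(n_points=5, n_params=20, lr=0.01, epochs=2000, seed=0):
    """Fit a linear model with more parameters than data points using SGD.

    All sizes and the learning rate are illustrative assumptions.
    Returns (loss before training, loss after training).
    """
    rng = random.Random(seed)
    # Synthetic data: fewer equations (points) than unknowns (parameters).
    xs = [[rng.gauss(0, 1) for _ in range(n_params)] for _ in range(n_points)]
    ys = [rng.gauss(0, 1) for _ in range(n_points)]

    # Step 1: initialize the parameters randomly.
    weights = [rng.gauss(0, 0.1) for _ in range(n_params)]
    initial_loss = mean_squared_error(weights, xs, ys)

    # Step 2: repeatedly visit the data in random order, nudging the
    # parameters against the gradient of the error on one point at a time.
    order = list(range(n_points))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            x, y = xs[i], ys[i]
            error = sum(w * xi for w, xi in zip(weights, x)) - y
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]

    return initial_loss, mean_squared_error(weights, xs, ys)


if __name__ == "__main__":
    before, after = train_sgd()
    print(f"training loss before: {before:.4f}, after: {after:.2e}")
```

Because there are more unknowns than equations, the training error can be driven essentially to zero, which is exactly the situation that classical statistics would flag as a risk of overfitting.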

The success of flexible deep-learning models can be seen in machine translation. For decades, software has been used to translate text from one language to another. Early approaches to this problem used rules designed by grammar experts. But as more textual data became available in specific languages, statistical approaches (ones that go by such esoteric names as maximum entropy, hidden Markov models, and conditional random fields) could be applied.

Initially, the approaches that worked best for each language differed based on data availability and grammatical properties. For example, rule-based approaches to translating languages such as Urdu, Arabic, and Malay outperformed statistical ones, at least at first. Today, all these approaches have been outpaced by deep learning, which has proven itself superior almost everywhere it’s applied.

So the good news is that deep learning provides enormous flexibility. The bad news is that this flexibility comes at an enormous computational cost. This unfortunate reality has two parts.

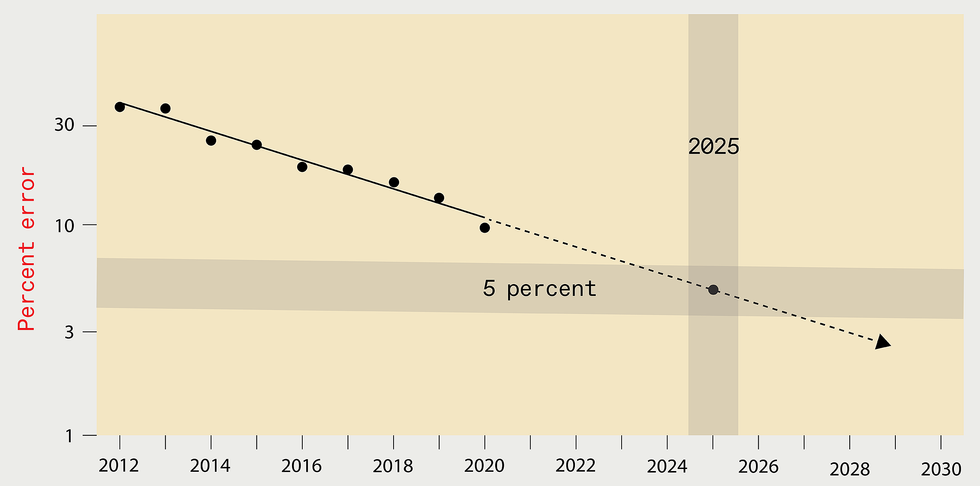

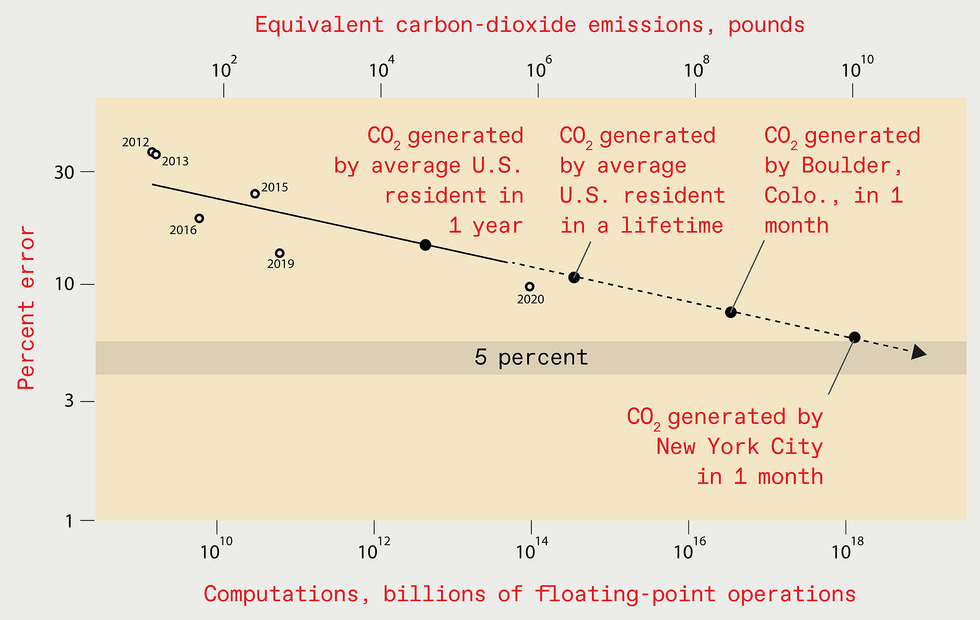

Extrapolating the gains of recent years might suggest that by 2025 the error level in the best deep-learning systems designed for recognizing objects in the ImageNet data set should be reduced to just 5 percent [top]. But the computing resources and energy required to train such a future system would be enormous, leading to the emission of as much carbon dioxide as New York City generates in one month [bottom].

SOURCE: N.C. THOMPSON, K. GREENEWALD, K. LEE, G.F. MANSO

The first part is true of all statistical models: To improve performance by a factor of k, at least k² more data points must be used to train the model. The second part of the computational cost comes explicitly from overparameterization. Once accounted for, this yields a total computational cost for improvement of at least k⁴. That little 4 in the exponent is very expensive: A 10-fold improvement, for example, would require at least a 10,000-fold increase in computation.
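The arithmetic behind those lower bounds can be sketched in a few lines. The function names below are hypothetical, introduced only for illustration; the exponents 2 and 4 are the ones stated in the text.

```python
def min_extra_data(k):
    """Lower bound on the growth in training data for a k-fold improvement."""
    return k ** 2


def min_extra_compute(k):
    """Lower bound on the growth in computation for a k-fold improvement."""
    return k ** 4


if __name__ == "__main__":
    for k in (2, 10):
        print(
            f"{k}-fold improvement: at least {min_extra_data(k)}x data, "
            f"at least {min_extra_compute(k)}x computation"
        )
```

For k = 10 this reproduces the 10,000-fold figure quoted above.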

To make the flexibility-computation trade-off more vivid, consider a scenario where you are trying to predict whether a patient’s X-ray reveals cancer. Suppose further that the true answer can be found if you measure 100 details in the X-ray (often called variables or features). The challenge is that we don’t know ahead of time which variables are important, and there could be a very large pool of candidate variables to consider.

The expert-system approach to this problem would be to have people who are knowledgeable in radiology and oncology specify the variables they think are important, allowing the system to examine only those. The flexible-system approach is to test as many of the variables as possible and let the system figure out on its own which are important, requiring more data and incurring much higher computational costs in the process.

Models for which experts have established the relevant variables are able to learn quickly what values work best for those variables, doing so with limited amounts of computation, which is why they were so popular early on. But their ability to learn stalls if an expert hasn’t correctly specified all the variables that should be included in the model. In contrast, flexible models like deep learning are less efficient, taking vastly more computation to match the performance of expert models. But, with enough computation (and data), flexible models can outperform ones for which experts have attempted to specify the relevant variables.

Clearly, you can get improved performance from deep learning if you use more computing power to build bigger models and train them with more data. But how expensive will this computational burden become? Will costs become sufficiently high that they hinder progress?

To answer these questions in a concrete way, we recently gathered data from more than 1,000 research papers on deep learning, spanning the areas of image classification, object detection, question answering, named-entity recognition, and machine translation. Here, we will only discuss image classification in detail, but the lessons apply broadly.

Over the years, reducing image-classification errors has come with an enormous expansion in computational burden. For example, in 2012 AlexNet, the model that first showed the power of training deep-learning systems on graphics processing units (GPUs), was trained for five to six days using two GPUs. By 2018, another model, NASNet-A, had cut the error rate of AlexNet in half, but it used more than 1,000 times as much computing to achieve this.

Our analysis of this phenomenon also allowed us to compare what’s actually happened with theoretical expectations. Theory tells us that computing needs to scale with at least the fourth power of the improvement in performance. In practice, the actual requirements have scaled with at least the ninth power.

This ninth power means that to halve the error rate, you can expect to need more than 500 times the computational resources. That’s a devastatingly high price. There may be a silver lining here, however. The gap between what’s happened in practice and what theory predicts might mean that there are still undiscovered algorithmic improvements that could greatly improve the efficiency of deep learning.
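The “more than 500 times” figure follows directly from the exponent. The short sketch below compares the theoretical and observed scaling for a halving of the error rate; the function and constant names are hypothetical, and both exponents are treated as exact even though the text gives them as lower bounds.

```python
THEORY_EXPONENT = 4    # fourth-power lower bound from theory
OBSERVED_EXPONENT = 9  # ninth-power scaling seen in practice


def compute_multiplier(improvement_factor, exponent):
    """Growth in computation needed for a given improvement in performance."""
    return improvement_factor ** exponent


if __name__ == "__main__":
    halving = 2  # cutting the error rate in half is a 2-fold improvement
    print("theory:  ", compute_multiplier(halving, THEORY_EXPONENT))
    print("observed:", compute_multiplier(halving, OBSERVED_EXPONENT))
```

Under these assumptions, theory asks for at least 16 times the computation to halve the error, while the observed trend asks for at least 512 times.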

As we noted, Moore’s Law and other hardware advances have provided massive increases in chip performance. Does this mean that the escalation in computing requirements doesn’t matter? Unfortunately, no. Of the 1,000-fold difference in the computing used by AlexNet and NASNet-A, only a six-fold improvement came from better hardware; the rest came from using more processors or running them longer, incurring higher costs.
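A rough way to see how little of that increase hardware absorbed is to split the 1,000-fold figure into two factors. The sketch below assumes the two contributions multiply, which is an illustrative simplification rather than something stated in the text, and the function name is hypothetical.

```python
def non_hardware_factor(total_increase, hardware_gain):
    """Part of the compute increase not explained by better hardware.

    Assumes total_increase = hardware_gain * (more processors / longer runs).
    """
    return total_increase / hardware_gain


if __name__ == "__main__":
    factor = non_hardware_factor(total_increase=1000, hardware_gain=6)
    print(f"more processors or longer runs: roughly {factor:.0f}-fold")
```

Under that assumption, roughly a 167-fold increase had to be paid for with more processors or longer training runs.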

Having estimated the computational cost-performance curve for image recognition, we can use it to estimate how much computation would be needed to reach even more impressive performance benchmarks in the future. For example, achieving a 5 percent error rate would require 10¹⁹ billion floating-point operations.

Important work by scholars at the University of Massachusetts Amherst allows us to understand the economic cost and carbon emissions implied by this computational burden. The answers are grim: Training such a model would cost US $100 billion and would produce as much carbon emissions as New York City does in a month. And if we estimate the computational burden of a 1 percent error rate, the results are considerably worse.

Is extrapolating out so many orders of magnitude a reasonable thing to do? Yes and no. Certainly, it is important to understand that the predictions aren’t precise, although with such eye-watering results, they don’t need to be to convey the overall message of unsustainability. Extrapolating this way would be unreasonable if we assumed that researchers would follow this trajectory all the way to such an extreme outcome. We don’t. Faced with skyrocketing costs, researchers will either have to come up with more efficient ways to solve these problems, or they will abandon working on these problems and progress will languish.

On the other hand, extrapolating our results is not only reasonable but also important, because it conveys the magnitude of the challenge ahead. The leading edge of this problem is already becoming apparent. When Google subsidiary DeepMind trained its system to play Go, it was estimated to have cost $35 million. When DeepMind’s researchers designed a system to play the StarCraft II video game, they purposefully didn’t try multiple ways of architecting an important component, because the training cost would have been too high.

At OpenAI, an important machine-learning think tank, researchers recently designed and trained a much-lauded deep-learning language system called GPT-3 at the cost of more than $4 million. Even though they made a mistake when they implemented the system, they didn’t fix it, explaining simply in a supplement to their scholarly publication that “due to the cost of training, it wasn’t feasible to retrain the model.”

Even businesses outside the tech industry are now starting to shy away from the computational expense of deep learning. A large European supermarket chain recently abandoned a deep-learning-based system that markedly improved its ability to predict which products would be purchased. The company executives dropped that attempt because they judged that the cost of training and running the system would be too high.

Faced with rising economic and environmental costs, the deep-learning community will need to find ways to increase performance without causing computing demands to go through the roof. If they don’t, progress will stagnate. But don’t despair yet: Plenty is being done to address this challenge.

One strategy is to use processors designed specifically to be efficient for deep-learning calculations. This approach was widely used over the last decade, as CPUs gave way to GPUs and, in some cases, field-programmable gate arrays and application-specific ICs (including Google’s Tensor Processing Unit). Fundamentally, all of these approaches sacrifice the generality of the computing platform for the efficiency of increased specialization. But such specialization faces diminishing returns. So longer-term gains will require adopting wholly different hardware frameworks, perhaps hardware that is based on analog, neuromorphic, optical, or quantum systems. Thus far, however, these wholly different hardware frameworks have yet to have much impact.

Another approach to reducing the computational burden focuses on generating neural networks that, when implemented, are smaller. This tactic lowers the cost each time you use them, but it often increases the training cost (what we’ve described so far in this article). Which of these costs matters most depends on the situation. For a widely used model, running costs are the biggest component of the total sum invested. For other models (for example, those that frequently need to be retrained), training costs may dominate. In either case, the total cost must be larger than just the training on its own. So if the training costs are too high, as we’ve shown, then the total costs will be, too.
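The trade-off between training and running costs can be sketched with a simple cost model. Everything here is an assumption made for illustration: the additive formula, the function name, and especially the dollar figures, which are invented and do not come from any real system.

```python
def total_cost(training_cost, cost_per_use, n_uses, n_trainings=1):
    """Hypothetical cost model: (re)training runs plus per-use running costs."""
    return training_cost * n_trainings + cost_per_use * n_uses


if __name__ == "__main__":
    # Invented numbers: a widely used model is trained once, queried a lot.
    widely_used = total_cost(1_000_000, cost_per_use=0.01, n_uses=1_000_000_000)
    # Invented numbers: a niche model is retrained often, queried rarely.
    often_retrained = total_cost(
        1_000_000, cost_per_use=0.01, n_uses=1_000_000, n_trainings=50
    )
    print(f"widely used model:     ${widely_used:,.0f}")
    print(f"often-retrained model: ${often_retrained:,.0f}")
```

In the first case running costs dominate and in the second training does, but in both the total is never smaller than the cost of training alone.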

And that’s the challenge with the various tactics that have been used to make implementation smaller: They don’t reduce training costs enough. For example, one allows for training a large network but penalizes complexity during training. Another involves training a large network and then “prunes” away unimportant connections. Yet another finds as efficient an architecture as possible by optimizing across many models, something called neural-architecture search. While each of these techniques can offer significant benefits for implementation, the effects on training are muted, and certainly not enough to address the concerns we see in our data. And in many cases they make the training costs higher.
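As an illustration of the pruning idea, here is a minimal sketch that zeroes out the smallest-magnitude weights in a layer. Using absolute weight size as the measure of “unimportant” is an assumption made for this example (it is one common criterion, not necessarily the one used in any particular work), and the function name and weight values are hypothetical.

```python
def prune_by_magnitude(weights, fraction):
    """Zero out roughly the given fraction of weights with smallest |value|.

    `weights` is a list of rows (a toy stand-in for one layer's connections).
    Weights tied with the cutoff value are pruned too, so slightly more than
    the requested fraction may be removed.
    """
    magnitudes = sorted(abs(w) for row in weights for w in row)
    n_prune = int(len(magnitudes) * fraction)
    if n_prune == 0:
        return [row[:] for row in weights]
    threshold = magnitudes[n_prune - 1]
    return [[0.0 if abs(w) <= threshold else w for w in row] for row in weights]


if __name__ == "__main__":
    layer = [[0.8, -0.05, 0.3], [0.01, -0.6, 0.02]]
    print(prune_by_magnitude(layer, fraction=0.5))
```

Note that the full network still has to be trained before any connections can be removed, which is why this kind of tactic shrinks the deployed model without shrinking the training bill.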

One up-and-coming technique that could reduce training costs goes by the name meta-learning. The idea is that the system learns on a variety of data and then can be applied in many areas. For example, rather than building separate systems to recognize dogs in images, cats in images, and cars in images, a single system could be trained on all of them and used multiple times.

Unfortunately, recent work by Andrei Barbu of MIT has revealed how hard meta-learning can be. He and his coauthors showed that even small differences between the original data and where you want to use it can severely degrade performance. They demonstrated that current image-recognition systems depend heavily on things like whether the object is photographed at a particular angle or in a particular pose. So even the simple task of recognizing the same objects in different poses causes the accuracy of the system to be nearly halved.

Benjamin Recht of the University of California, Berkeley, and others made this point even more starkly, showing that even with novel data sets purposely constructed to mimic the original training data, performance drops by more than 10 percent. If even small changes in data cause large performance drops, the data needed for a comprehensive meta-learning system might be enormous. So the great promise of meta-learning remains far from being realized.

Another possible strategy to evade the computational limits of deep learning would be to move to other, perhaps as-yet-undiscovered or underappreciated types of machine learning. As we described, machine-learning systems constructed around the insight of experts can be much more computationally efficient, but their performance can’t reach the same heights as deep-learning systems if those experts cannot distinguish all the contributing factors.

Neuro-symbolic methods and other techniques are being developed to combine the power of expert knowledge and reasoning with the flexibility often found in neural networks.

Like the situation that Rosenblatt faced at the dawn of neural networks, deep learning is today becoming constrained by the available computational tools. Faced with computational scaling that would be economically and environmentally ruinous, we must either adapt how we do deep learning or face a future of much slower progress. Clearly, adaptation is preferable. A clever breakthrough might find a way to make deep learning more efficient or computer hardware more powerful, which would allow us to continue to use these extraordinarily flexible models. If not, the pendulum will likely swing back toward relying more on experts to identify what needs to be learned.
