Artificial intelligence has reached deep into our lives, though you might be hard pressed to point to obvious examples of it. Among countless other behind-the-scenes chores, neural networks power our virtual assistants, make online shopping recommendations, recognize people in our snapshots, scrutinize our banking transactions for evidence of fraud, transcribe our voice messages, and weed out hateful social-media postings. What these applications have in common is that they involve learning and operating in a constrained, predictable environment.

But embedding AI more firmly into our endeavors and enterprises poses a great challenge. To get to the next level, researchers are trying to fuse AI and robotics to create an intelligence that can make decisions and control a physical body in the messy, unpredictable, and unforgiving real world. It’s a potentially revolutionary objective that has caught the attention of some of the most powerful tech-research organizations on the planet. “I’d say that robotics as a field is probably 10 years behind where computer vision is,” says Raia Hadsell, head of robotics at DeepMind, Google’s London-based AI partner. (Both companies are subsidiaries of Alphabet.)

Even for Google, the challenges are daunting. Some are hard but straightforward: For most robotic applications, it’s difficult to gather the huge data sets that have driven progress in other areas of AI. But some problems are more profound, and relate to longstanding conundrums in AI. Problems like, how do you learn a new task without forgetting the old one? And how do you create an AI that can apply the skills it learns for a new task to the tasks it has mastered before?

Success would mean opening AI to new categories of application. Many of the things we most fervently want AI to do (drive cars and trucks, work in nursing homes, clean up after disasters, perform basic household chores, build houses, sow, nurture, and harvest crops) could be accomplished only by robots that are much more sophisticated and versatile than the ones we have now.

Beyond opening up potentially enormous markets, the work bears directly on matters of profound importance not just for robotics but for all AI research, and indeed for our understanding of our own intelligence.

Let’s start with the prosaic problem first. A neural network is only as good as the quality and quantity of the data used to train it. The availability of enormous data sets has been key to the recent successes in AI: Image-recognition software is trained on millions of labeled images. AlphaGo, which beat a grandmaster at the ancient board game of Go, was trained on a data set of hundreds of thousands of human games, and on the millions of games it played against itself in simulation.

To train a robot, though, such huge data sets are unavailable. “This is a problem,” notes Hadsell. You can simulate thousands of games of Go in a few minutes, run in parallel on hundreds of CPUs. But if it takes 3 seconds for a robot to pick up a cup, then you can only do it 20 times per minute per robot. What’s more, if your image-recognition system gets the first million images wrong, it might not matter much. But if your bipedal robot falls over the first 1,000 times it tries to walk, then you’ll have a badly dented robot, if not worse.

The problem of real-world data is, at least for now, insurmountable. But that’s not stopping DeepMind from gathering all it can, with robots constantly whirring in its labs. And across the field, robotics researchers are trying to get around this paucity of data with a technique called sim-to-real.

The San Francisco-based lab OpenAI recently exploited this strategy in training a robot hand to solve a Rubik’s Cube. The researchers built a virtual environment containing a cube and a virtual model of the robot hand, and trained the AI that would run the hand in the simulation. Then they installed the AI in the real robot hand, and gave it a real Rubik’s Cube. Their sim-to-real program enabled the physical robot to solve the physical puzzle.

Despite such successes, the technique has major limitations, Hadsell says, noting that AI researcher and roboticist Rodney Brooks “likes to say that simulation is ‘doomed to succeed.’ ” The trouble is that simulations are too perfect, too removed from the complexities of the real world. “Imagine two robot hands in simulation, trying to put a cellphone together,” Hadsell says. If you allow them to try millions of times, they might eventually discover that by throwing all the pieces up in the air with exactly the right amount of force, with exactly the right amount of spin, they can build the cellphone in a few seconds: The pieces fall down into place precisely where the robot wants them, making a phone. That might work in the perfectly predictable environment of a simulation, but it could never work in complex, messy reality. For now, researchers have to settle for these imperfect simulacrums. “You can add noise and randomness artificially,” Hadsell explains, “but no contemporary simulation is good enough to truly recreate even a small slice of reality.”

There are more profound problems. The one that Hadsell is most interested in is that of catastrophic forgetting: When an AI learns a new task, it has an unfortunate tendency to forget all the old ones.

The problem isn’t lack of data storage. It’s something inherent in how most modern AIs learn. Deep learning, the most common category of artificial intelligence today, is based on neural networks that use neuronlike computational nodes, arranged in layers, that are linked together by synapselike connections.

Before it can perform a task, such as classifying an image as that of either a cat or a dog, the neural network must be trained. The first layer of nodes receives an input image of either a cat or a dog. The nodes detect various features of the image and either fire or stay quiet, passing these inputs on to a second layer of nodes. Each node in each layer will fire if the input from the layer before is high enough. There can be many such layers, and at the end, the last layer will render a verdict: “cat” or “dog.”

Each connection has a different “weight.” For example, node A and node B might both feed their output to node C. Depending on their signals, C may then fire, or not. However, the A-C connection may have a weight of 3, and the B-C connection a weight of 5. In this case, B has greater influence over C. To give an implausibly oversimplified example, A might fire if the creature in the image has sharp teeth, while B might fire if the creature has a long snout. Since the length of the snout is more helpful than the sharpness of the teeth in distinguishing dogs from cats, C pays more attention to B than it does to A.

Each node has a threshold over which it will fire, sending a signal to its own downstream connections. Let’s say C has a threshold of 7. Then if only A fires, it will stay quiet; if only B fires, it will stay quiet; but if A and B fire together, their signals to C will add up to 8, and C will fire, affecting the next layer.
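The arithmetic of this example can be checked with a few lines of Python. The weights (3 and 5) and the threshold (7) come from the text; the function name `fires` and the strict "greater than" comparison are illustrative assumptions, and real networks use smoother activation functions than this on/off rule.

```python
# Toy threshold node C, fed by upstream nodes A and B.
WEIGHTS = {"A": 3, "B": 5}   # A-C and B-C connection weights from the text
THRESHOLD = 7                # C fires only when its input exceeds this value


def fires(active_inputs):
    """Return True if node C fires, given the set of upstream nodes that fired."""
    total = sum(WEIGHTS[name] for name in active_inputs)
    return total > THRESHOLD


print(fires({"A"}))        # False: 3 does not exceed 7
print(fires({"B"}))        # False: 5 does not exceed 7
print(fires({"A", "B"}))   # True: 3 + 5 = 8 exceeds 7
```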

What does all this have to do with training? Any learning scheme must be able to distinguish between correct and incorrect responses and improve itself accordingly. If a neural network is shown a picture of a dog, and it outputs “dog,” then the connections that fired will be strengthened; those that did not will be weakened. If it incorrectly outputs “cat,” then the reverse happens: The connections that fired will be weakened; those that did not will be strengthened.
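The strengthen-or-weaken rule described here can be sketched as follows. This is a deliberately simplified illustration of the rule as stated in the text, not the gradient-based training that practical deep-learning systems use; the function name `update_weights` and the fixed `step` size are assumptions made for the example.

```python
def update_weights(weights, fired, correct, step=0.5):
    """Return new weights after one labeled example.

    weights: dict mapping connection name to its current weight
    fired:   set of connection names that were active for this example
    correct: True if the network's answer matched the label
    """
    updated = {}
    for name, weight in weights.items():
        # Correct answer: reinforce connections that fired, weaken the rest.
        # Wrong answer: do the reverse.
        strengthen = (name in fired) == correct
        updated[name] = weight + step if strengthen else weight - step
    return updated


weights = {"A": 3.0, "B": 5.0}
after_hit = update_weights(weights, fired={"B"}, correct=True)
after_miss = update_weights(weights, fired={"B"}, correct=False)
print(after_hit)    # B is strengthened, A is weakened
print(after_miss)   # B is weakened, A is strengthened
```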

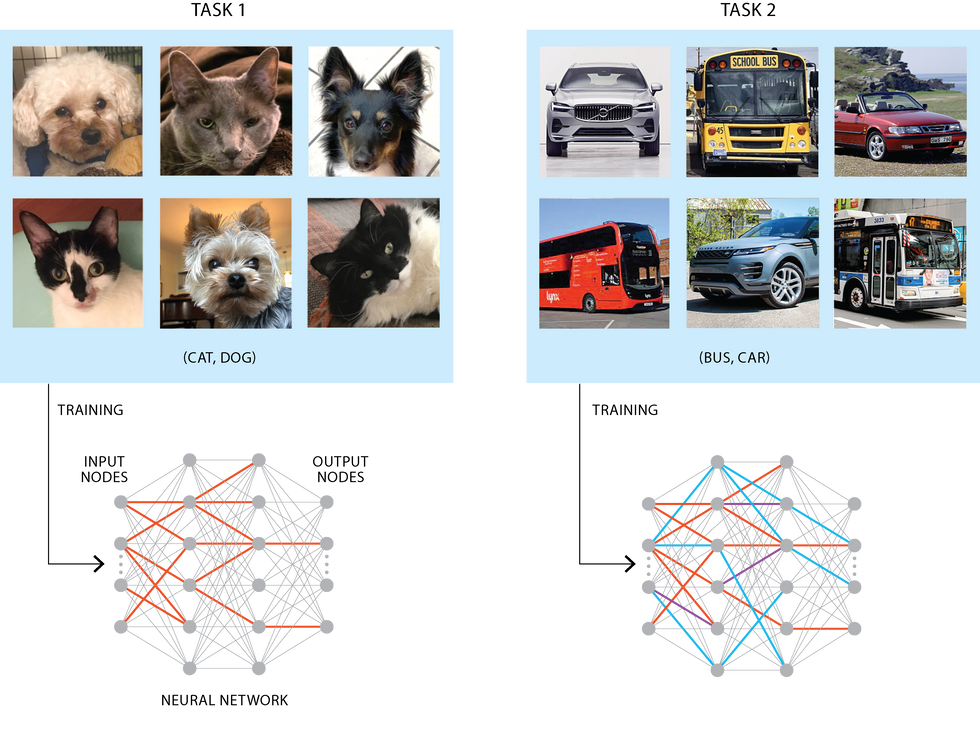

Training of a neural network to distinguish whether a photograph is of a cat or a dog uses a portion of the nodes and connections in the network [shown in red, at left]. Using a technique called elastic weight consolidation, the network can then be trained on a different task, distinguishing images of cars from buses. The key connections from the original task are “frozen” and new connections are established [blue, at right]. A small fraction of the frozen connections, which would otherwise be used for the second task, are unavailable [purple, right diagram]. That slightly reduces performance on the second task.

But imagine you take your dog-and-cat-classifying neural network, and now start training it to distinguish a bus from a car. All its previous training will be useless. Its outputs in response to vehicle images will be random at first. But as it is trained, it will reweight its connections and gradually become effective. It will eventually be able to classify buses and cars with great accuracy. At this point, though, if you show it a picture of a dog, all the nodes will have been reweighted, and it will have “forgotten” everything it learned previously.

This is catastrophic forgetting, and it’s a large part of the reason that programming neural networks with humanlike flexible intelligence is so difficult. “One of our classic examples was training an agent to play Pong,” says Hadsell. You could get it playing so that it would win every game against the computer 20 to zero, she says; but if you perturb the weights just a little bit, such as by training it on Breakout or Pac-Man, “then the performance will (boop!) go off a cliff.” Suddenly it will lose 20 to zero every time.
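The effect can be reproduced in miniature. The sketch below is a toy under loud assumptions: the "network" is a single shared weight, each task is reduced to a hypothetical ideal value for that weight (`TASK_A` and `TASK_B`), and training is plain gradient descent on a squared error. Nothing here comes from DeepMind's experiments; it only shows why retraining a shared weight on a second task erases the first.

```python
def train(weight, target, steps=200, learning_rate=0.1):
    """Gradient descent on the squared error (weight - target) ** 2."""
    for _ in range(steps):
        gradient = 2 * (weight - target)
        weight -= learning_rate * gradient
    return weight


def error(weight, target):
    """Squared distance between the current weight and a task's ideal weight."""
    return (weight - target) ** 2


TASK_A, TASK_B = 2.0, -1.0   # hypothetical ideal weights for two tasks

weight = train(0.0, TASK_A)
error_a_before = error(weight, TASK_A)   # near 0: task A has been learned

weight = train(weight, TASK_B)           # the same weight, retrained on task B
error_a_after = error(weight, TASK_A)    # near 9: task A has been "forgotten"

print(error_a_before, error_a_after)
```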

This weakness poses a major stumbling block not only for machines built to succeed at several different tasks, but also for any AI systems that are meant to adapt to changing circumstances in the world around them, learning new strategies as necessary.

There are ways around the problem. An obvious one is to simply silo off each skill. Train your neural network on one task, save its weights to data storage, then train it on a new task, saving those weights elsewhere. Then the system need only recognize the type of challenge at the outset and apply the proper set of weights.

But that strategy is limited. For one thing, it’s not scalable. If you want to build a robot capable of accomplishing many tasks in a broad range of environments, you’d have to train it on every single one of them. And if the environment is unstructured, you won’t even know ahead of time what some of those tasks will be. Another problem is that this strategy doesn’t let the robot transfer the skills that it acquired solving task A over to task B. Such an ability to transfer knowledge is an important hallmark of human learning.

Hadsell’s preferred approach is something called “elastic weight consolidation.” The gist is that, after learning a task, a neural network will assess which of the synapselike connections between the neuronlike nodes are the most important to that task, and it will partially freeze their weights. “There’ll be a relatively small number,” she says. “Say, 5 percent.” Then you protect these weights, making them harder to change, while the other nodes can learn as usual. Now, when your Pong-playing AI learns to play Pac-Man, those neurons most relevant to Pong will stay mostly in place, and it will continue to do well enough on Pong. It might not keep winning by a score of 20 to zero, but possibly by 18 to 2.
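A minimal sketch of the "partial freezing" idea, assuming a two-weight toy model: while training on the new task, a quadratic penalty pulls each weight back toward its old value in proportion to how important it was for the old task. The targets, the hand-picked `IMPORTANCE` values, and the `strength` setting are all hypothetical; in published descriptions of elastic weight consolidation the importance is estimated from the network itself (via Fisher information) rather than set by hand.

```python
TARGET_A = [2.0, 0.0]     # hypothetical weights learned on task A (think Pong)
TARGET_B = [-1.0, 3.0]    # hypothetical ideal weights for task B (think Pac-Man)
IMPORTANCE = [1.0, 0.0]   # assumed: only the first weight matters to task A


def train_on_b(weights, strength, steps=1000, learning_rate=0.01):
    """Gradient descent on task B's error plus a penalty protecting task A.

    Penalty: 0.5 * strength * sum(importance_i * (w_i - old_w_i) ** 2)
    """
    w = list(weights)
    for _ in range(steps):
        for i in range(len(w)):
            task_gradient = 2 * (w[i] - TARGET_B[i])
            penalty_gradient = strength * IMPORTANCE[i] * (w[i] - TARGET_A[i])
            w[i] -= learning_rate * (task_gradient + penalty_gradient)
    return w


def task_a_error(weights):
    # In this toy, task A depends only on the first weight.
    return (weights[0] - TARGET_A[0]) ** 2


unprotected = train_on_b(TARGET_A, strength=0.0)    # ordinary retraining
protected = train_on_b(TARGET_A, strength=50.0)     # important weight held in place

print(task_a_error(unprotected))   # large: task A is overwritten
print(task_a_error(protected))     # small: task A mostly survives
```

The protected run gives up a little performance on both tasks, because the first weight settles between its two ideal values, while the unimportant second weight remains free to learn task B.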

Raia Hadsell [top] leads a team of roboticists at DeepMind in London. At OpenAI, researchers used simulations to train a robot hand [above] to solve a Rubik’s Cube. Top: DeepMind; Bottom: OpenAI

There’s an obvious side effect, however. Each time your neural network learns a task, more of its neurons will become inelastic. If Pong fixes some neurons, and Breakout fixes some more, “eventually, as your agent goes on learning Atari games, it’s going to get more and more fixed, less and less plastic,” Hadsell explains.

This is roughly similar to human learning. When we’re young, we’re fantastic at learning new things. As we age, we get better at the things we have learned, but find it harder to learn new skills.

“Babies start out having much denser connections that are much weaker,” says Hadsell. “Over time, those connections become sparser but stronger. It allows you to have memories, but it also limits your learning.” She speculates that something like this might help explain why very young children have no memories: “Our brain layout simply doesn’t support it.” In a very young child, “everything is being catastrophically forgotten all the time, because everything is connected and nothing is protected.”

The loss-of-elasticity problem is, Hadsell thinks, fixable. She has been working with the DeepMind team since 2018 on a technique called “progress and compress.” It involves combining three relatively recent ideas in machine learning: progressive neural networks, knowledge distillation, and elastic weight consolidation, described above.

Progressive neural networks are a straightforward way of avoiding catastrophic forgetting. Instead of having a single neural network that trains on one task and then another, you have one neural network that trains on a task (say, Breakout). Then, when it has finished training, it freezes its connections in place, moves that neural network into storage, and creates a new neural network to train on a new task (say, Pac-Man). Its knowledge of each of the earlier tasks is frozen in place, so it cannot be forgotten. And when each new neural network is created, it brings over connections from the previous games it has trained on, so it can transfer skills forward from old tasks to new ones. But, Hadsell says, it has a problem: It can’t transfer knowledge the other way, from new skills to old. “If I go back and play Breakout again, I haven’t actually learned anything from this [new] game,” she says. “There’s no backwards transfer.”

That’s where knowledge distillation, developed by the British-Canadian computer scientist Geoffrey Hinton, comes in. It involves taking many different neural networks trained on a task and compressing them into a single one, averaging their predictions. So, instead of having lots of neural networks, each trained on an individual game, you have just two: one that learns each new game, called the “active column,” and one that contains all the learning from previous games, averaged out, called the “knowledge base.” First the active column is trained on a new task (the “progress” phase), and then its connections are added to the knowledge base and distilled (the “compress” phase). It helps to picture the two networks as, literally, two columns. Hadsell does, and draws them on the whiteboard for me as she talks.
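The "averaging their predictions" step can be illustrated with a small sketch. It follows the description in the text only loosely: several teacher networks' output probabilities are averaged into a soft target, and a single student is scored by how closely its own prediction matches that target. The example probabilities and the names `average_predictions` and `cross_entropy` are assumptions for illustration, and refinements found in published distillation methods, such as temperature scaling, are left out.

```python
import math


def average_predictions(teacher_outputs):
    """Average several probability vectors, class by class, into one soft target."""
    count = len(teacher_outputs)
    return [sum(column) / count for column in zip(*teacher_outputs)]


def cross_entropy(target, predicted):
    """Distillation loss: lower means the student matches the soft target better."""
    return -sum(t * math.log(p) for t, p in zip(target, predicted))


# Two hypothetical teachers giving probabilities for ["cat", "dog"].
teachers = [[0.9, 0.1], [0.7, 0.3]]
soft_target = average_predictions(teachers)   # approximately [0.8, 0.2]

good_student = [0.8, 0.2]   # agrees with the averaged teachers
poor_student = [0.5, 0.5]   # has not absorbed their knowledge

print(cross_entropy(soft_target, good_student))
print(cross_entropy(soft_target, poor_student))
```

Training the student to minimize this loss pushes its outputs toward the teachers' consensus, which is how many networks can be folded into one.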

The trouble is, by using knowledge distillation to lump the many individual neural networks of the progressive-neural-network system together, you’ve brought the problem of catastrophic forgetting back in. You might change all the weights of the connections and render your earlier training useless. To deal with this, Hadsell adds in elastic weight consolidation: Each time the active column transfers its learning about a particular task to the knowledge base, it partially freezes the nodes most important to that particular task.

By having two neural networks, Hadsell’s system avoids the main problem with elastic weight consolidation, which is that all its connections will eventually freeze. The knowledge base can be as large as you like, so a few frozen nodes won’t matter. But the active column itself can be much smaller, and smaller neural networks can learn faster and more efficiently than larger ones. So the progress-and-compress model, Hadsell says, will allow an AI system to transfer skills from old tasks to new ones, and from new tasks back to old ones, while never either catastrophically forgetting or becoming unable to learn anything new.

Other researchers are using different strategies to attack the catastrophic forgetting problem; there are half a dozen or so avenues of research. Ted Senator, a program manager at the Defense Advanced Research Projects Agency (DARPA), leads a group that is using one of the most promising, a technique called internal replay. “It’s modeled after theories of how the brain operates,” Senator explains, “particularly the role of sleep in preserving memory.”

The theory is that the human brain replays the day’s memories, both while awake and asleep: It reactivates its neurons in patterns similar to those that arose while it was having the corresponding experience. This reactivation helps stabilize the patterns, meaning that they are not overwritten so easily. Internal replay does something similar. In between learning tasks, the neural network recreates patterns of connections and weights, loosely mimicking the awake-sleep cycle of human neural activity. The technique has proven quite effective at avoiding catastrophic forgetting.

There are many other hurdles to overcome in the quest to bring embodied AI safely into our daily lives. “We have made huge progress in symbolic, data-driven AI,” says Thrishantha Nanayakkara, who works on robotics at Imperial College London. “But when it comes to contact, we fail miserably. We don’t have a robot that we can trust to hold a hamster safely. We cannot trust a robot to be around an elderly person or a child.”

Nanayakkara points out that much of the “processing” that enables animals to deal with the world doesn’t happen in the brain, but rather elsewhere in the body. For instance, the shape of the human ear canal works to separate out sound waves, essentially performing “the Fourier series in real time.” Otherwise that processing would have to happen in the brain, at a cost of precious microseconds. “If, when you hear things, they’re no longer there, then you’re not embedded in the environment,” he says. But most robots currently rely on CPUs to process all the inputs, a limitation that he believes will have to be surmounted before substantial progress can be made.

His colleague Petar Kormushev says another problem is proprioception, the robot’s sense of its own physicality. A robot’s model of its own size and shape is programmed in directly by humans. The problem is that when it picks up a heavy object, it has no way of updating its self-image. When we pick up a hammer, we adjust our mental model of our body’s shape and weight, which lets us use the hammer as an extension of our body. “It sounds ridiculous but they [robots] are not able to update their kinematic models,” he says. Newborn babies, he notes, make random movements that give them feedback not only about the world but about their own bodies. He believes that some analogous technique would work for robots.

At the University of Oxford, Ingmar Posner is working on a robot version of “metacognition.” Human thought is often modeled as having two main “systems”: system 1, which responds quickly and intuitively, such as when we catch a ball or answer questions like “which of these two blocks is blue?,” and system 2, which responds more slowly and with more effort. It comes into play when we learn a new task or answer a more difficult mathematical question. Posner has built functionally equivalent systems in AI. Robots, in his view, are consistently either overconfident or underconfident, and need ways of knowing when they don’t know something. “There are things in our brain that check our responses about the world. There’s a bit which says don’t trust your intuitive response,” he says.

For most of these researchers, including Hadsell and her colleagues at DeepMind, the long-term goal is “general” intelligence. However, Hadsell’s idea of an artificial general intelligence isn’t the usual one, of an AI that can perform all the intellectual tasks that a human can, and more. Motivating her own work has “never been this idea of building a superintelligence,” she says. “It’s more: How do we come up with general methods to develop intelligence for solving particular problems?” Cat intelligence, for instance, is general in that it will never encounter some new problem that makes it freeze up or fail. “I find that level of animal intelligence, which involves incredible agility in the world, fusing different sensory modalities, really appealing. You know the cat is never going to learn language, and I’m okay with that.”

Hadsell wants to build algorithms and robots that will be able to learn and cope with a wide array of problems in a specific sphere. A robot intended to clean up after a nuclear mishap, for example, might have some quite high-level goal (“make this area safe”) and be able to divide that into smaller subgoals, such as finding the radioactive materials and safely removing them.

I can’t resist asking about consciousness. Some AI researchers, including Hadsell’s DeepMind colleague Murray Shanahan, suspect that it will be impossible to build an embodied AI of real general intelligence without the machine having some sort of consciousness. Hadsell herself, though, despite a background in the philosophy of religion, has a robustly practical approach.

“I have a fairly simplistic view of consciousness,” she says. For her, consciousness means an ability to think outside the narrow moment of “now”: to use memory to access the past, and to use imagination to envision the future. We humans do this well. Other creatures, less so: Cats seem to have a smaller time horizon than we do, with less planning for the future. Bugs, less still. She is not keen to be drawn out on the hard problem of consciousness and other philosophical ideas. In fact, most roboticists seem to want to avoid it. Kormushev likens it to asking “Can submarines swim?…It’s pointless to debate. As long as they do what I want, we don’t have to torture ourselves with the question.”

Pushing a star-shaped peg into a star-shaped hole may seem simple, but it was a minor triumph for one of DeepMind’s robots. DeepMind

In the DeepMind robotics lab it’s easy to see why that sort of question is not front and center. The robots’ efforts to pick up blocks suggest we don’t have to worry just yet about philosophical issues relating to artificial consciousness.

Nevertheless, while walking around the lab, I find myself cheering one of them on. A red robot arm is trying, jerkily, to pick up a star-shaped brick and then insert it into a star-shaped aperture, as a toddler might. On the second attempt, it gets the brick aligned and is on the verge of putting it in the slot. I find myself yelling “Come on, lad!,” provoking a raised eyebrow from Hadsell. Then it successfully puts the brick in place.

One task completed, at least. Now, it just needs to hang on to that strategy while learning to play Pong.

This article appears in the October 2021 print issue as “How to Train an All-Purpose Robot.”
